How to Use AI Without Losing Your Mind

A common-sense, hype-free guide to using AI to become more effective

Over the last 18 months, some people have hit another gear with AI. They’re moving faster and shipping more without sacrificing quality.

But thanks to the relentless AI hype machine, many others have not only failed to improve, but have actually gone backward. They’ve lost focus, wasted time, and shipped slop that undermines the trust of the people they work with.

The difference between these two groups comes down to having thoughtful answers to three questions:

What problems should I solve with AI?

How should I interact with AI?

Which tools should I use?

So, let’s try to answer them.

1. What problems should I solve with AI?

The world is currently full of “productivity theater”.

People design intricate agentic workflows that solve problems that didn’t need solving. People spend the weekend vibe-coding an app that they could have paid $2.99 for.

This is fine. Great, even! Many people have fun building this kind of thing, and by doing it, they’re helping us learn about all the new stuff.

But it is not actually making them more effective. If effectiveness is your goal, the first test of which problems are a good fit for AI is simply “is it big enough?” Does it actually take a lot of your time? Is it something you must do repeatedly? A surprising number of people are failing this test today.

There is a second, more subtle test that even more people are failing: understanding whether the work is about execution or about exploration.

One way to understand this point is to watch the debate currently happening on X about whether AI will kill spreadsheets. Many people in tech are convinced it will, but many in finance think they’ll never die. What is happening here?

Spreadsheets are used for two very different things:

“Mini software”: dashboards, inventory trackers, marketing attribution, and thousands of other hacked-together apps

A decision-making tool: financial models, ROI models, scenario models

The first case is about execution. All you care about is accurately charting a metric or keeping track of inventory, and spreadsheets are a quick way to do that. AI will indeed replace a lot of this because the spreadsheet is just janky software, and AI will make it easy to build non-janky software.

But in the second use case, the goal is actually exploration. When you set out to build a financial model, the whole point is that you don't yet know whether you should invest in the company. You have to go through the process of collecting the inputs, building the model, tweaking it, adding to it, and playing with sensitivities. AI can't automate this because you don't even know what the right answer is until you get into it.

A lot of work is exploratory. Take writing, for example: sometimes you just need to fire off an email or fill out a form. Hopefully, AI automates all of that. But if you’re writing a strategy doc or an essay or your senior thesis, the point is that you have to do the writing yourself to figure out what you’re trying to say. Writing is how you learn.

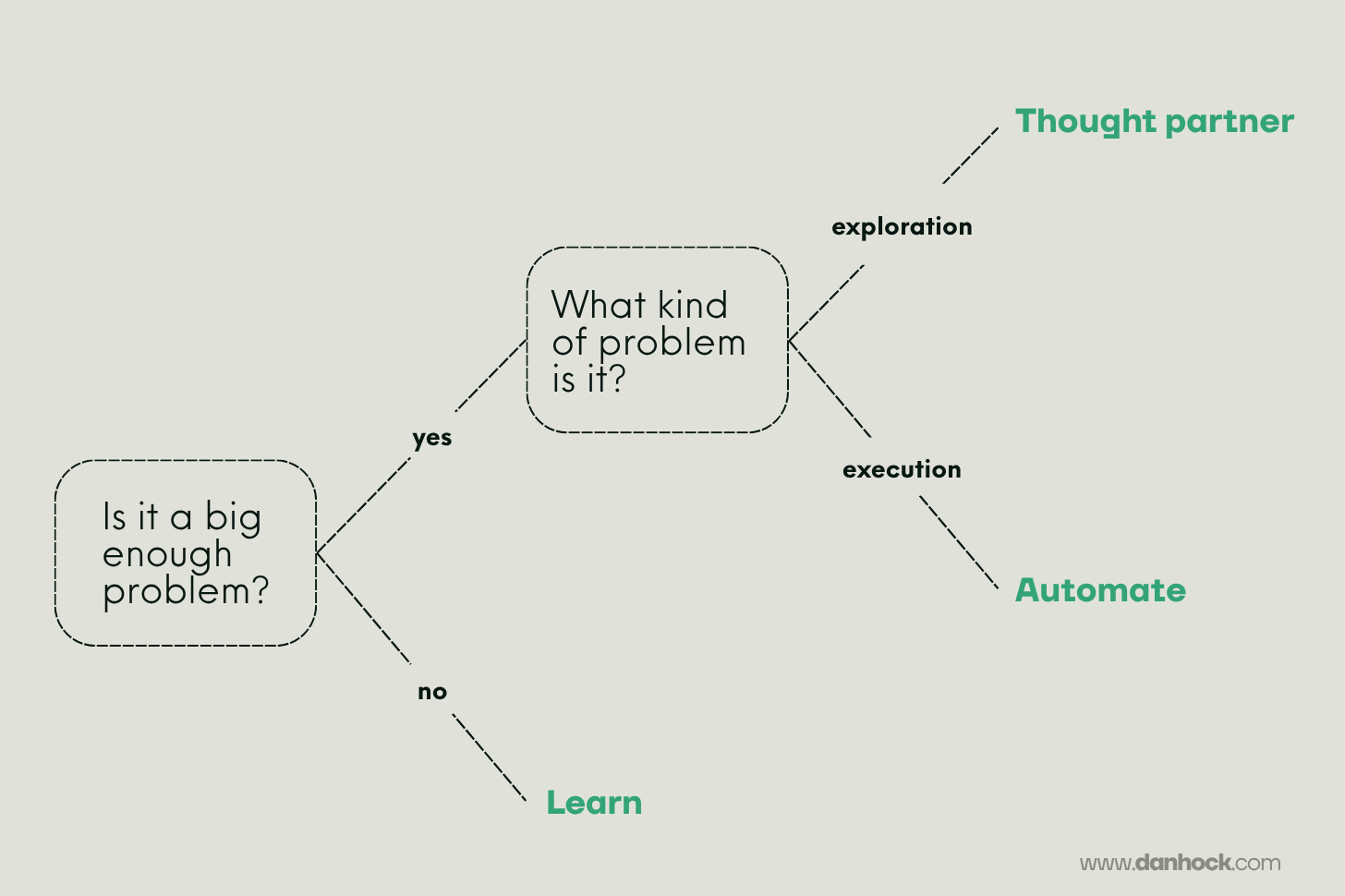

So there is a basic decision tree for how you should apply AI to different kinds of problems:

If the problem is not big enough to be worth your time, you can use it to learn about new tools, but that’s about it. For most people, building an agent to give them a “morning briefing” that summarizes their calendar and the news and the weather is not worth doing, unless they want the practice.

If the problem is big enough, and if the goal is simply execution, that’s a good candidate for automation. This would include an engineer writing the actual code, an analyst writing SQL, or a marketer generating tons of ad copy to test. Pretty soon, people will be spending very little time on these kinds of problems.

But it turns out that a lot of the most important work (the stuff that actually separates great people from mediocre ones) is in a third category. These are big, exploratory problems. This could be writing a strategy doc, designing an org chart, or deciding which features to launch. It is not possible to automate this work. Instead, it is best to think of AI as a thought partner.

Which brings us to our second question:

2. How should I interact with AI?

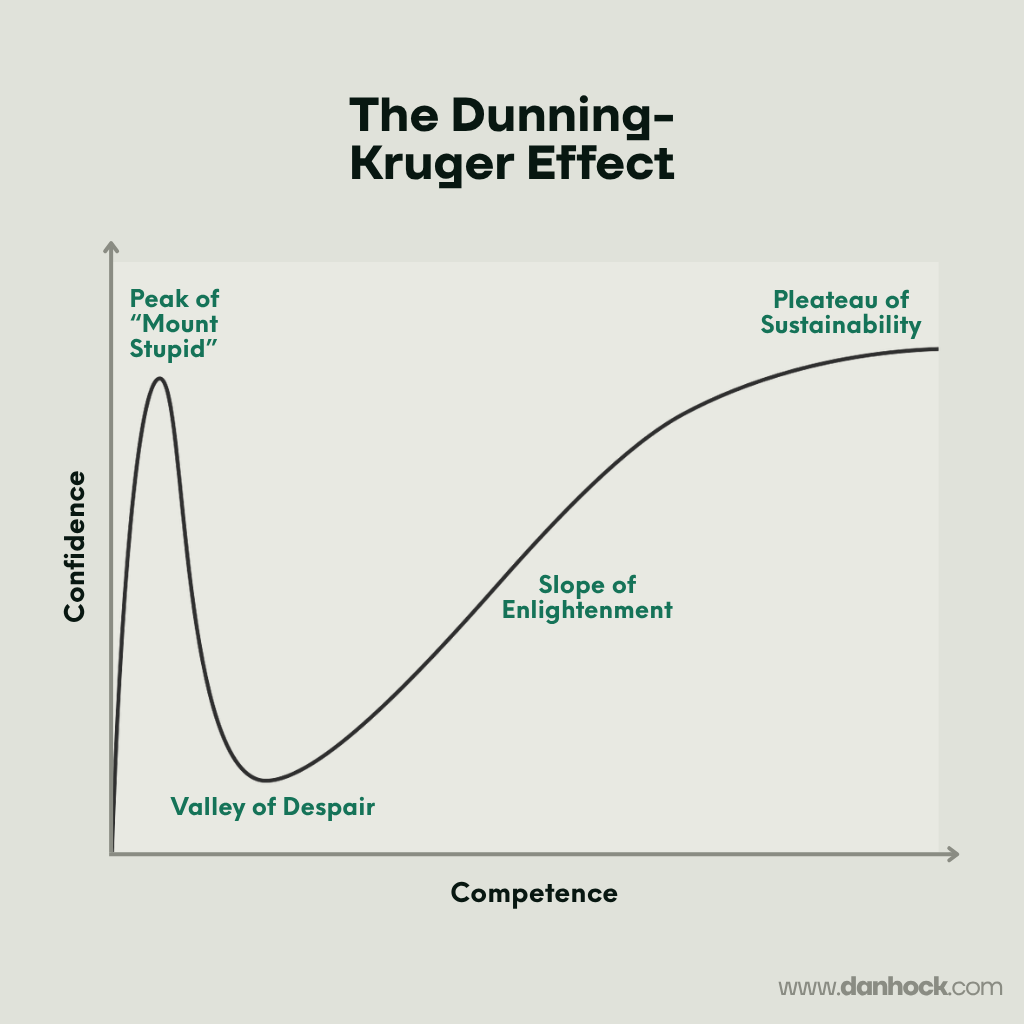

Your mind will go through three phases as you navigate any sufficiently large, exploratory project:

1. “Wow, this is simple. It will be easy!”

2. “This might be harder than I thought. I’m getting conflicting information and running into real tradeoffs.”

3. “I’m starting to make sense of things. It seems simple again, but this time I actually understand it.”

Each project is essentially a microcosm of the Dunning-Kruger effect. People with low competence have high but false confidence, that confidence evaporates as they start to learn more, and then they slowly rebuild real confidence.

The temptation is to use AI to skip over the “valley of despair” entirely. You could come up with a reasonable scope for the problem, ask Claude some reasonable questions, and then just accept its reasonable-sounding answers.

The problem is that the initial answer is likely wrong in meaningful ways. And you didn’t learn anything through the process that would let you reason about where it is wrong.

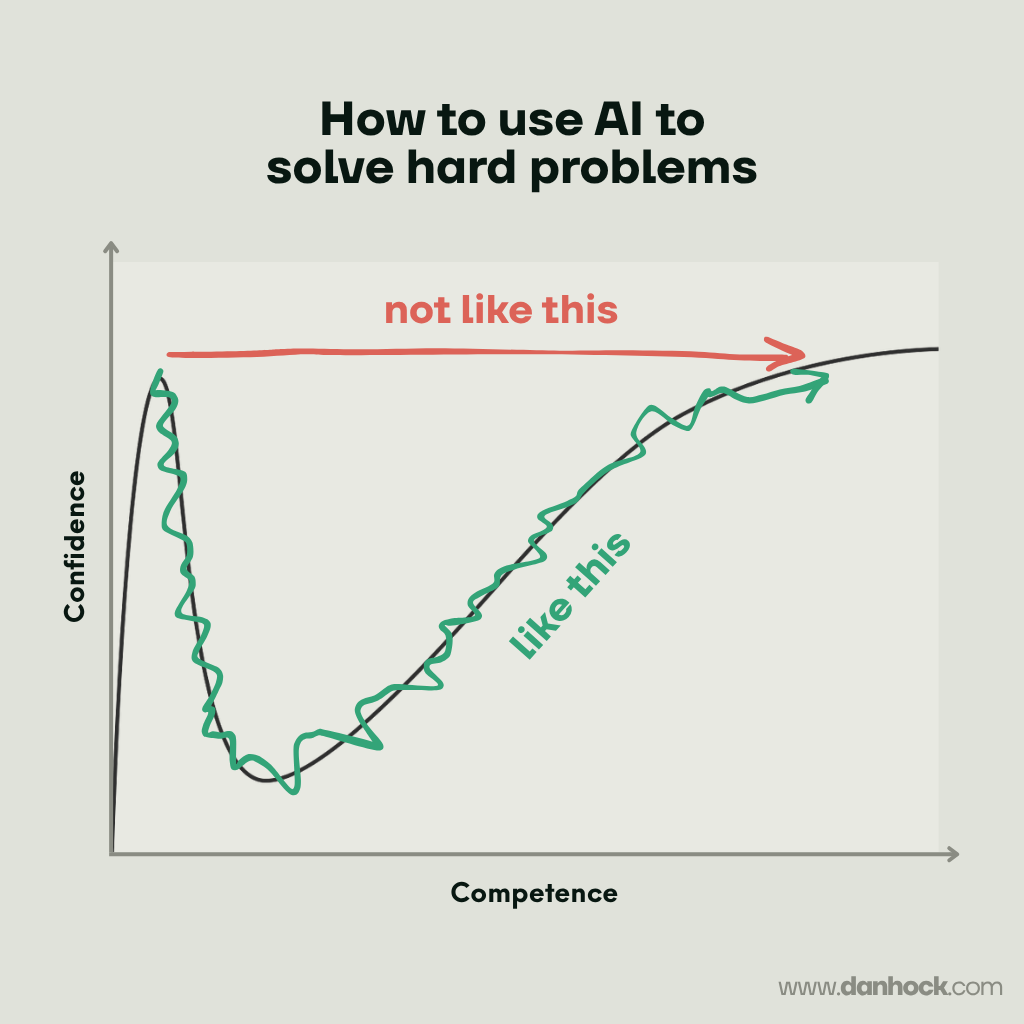

What you actually need to do is use AI to navigate through the complexity with you. It should look something like this:

For example, I used Opus 4.6 to research a post I wrote about which SaaS companies will be defensible against AI. Here is what that entailed:

I started by writing a draft of the essay cold, with no AI assistance

I gave Claude this draft, along with a mountain of other context, including the ~20 articles linked at the end of the piece. I asked it to synthesize what everyone else was saying and use it to critique the framework in my essay

I asked it to create a spreadsheet with the ~70 SaaS companies with >$1B in annual revenue, and iterated on the fields to fill out for each (category, business model, pricing, financial performance, market cap)

I asked Claude to score all of the companies against the framework to see if the more defensible ones had fared better in terms of financial performance and market cap in recent months (they had, but not as strongly as I hoped)

I then used that as a jumping-off point to iterate on the defensibility framework and re-score the companies until it was predicting their performance quite well

I then had Claude find specific company examples to cite in the post, pulling in public filings, analyst reports, and other context to refine them

And so on, resulting in a thread of 300-400 back-and-forth questions that had to compact its context 5-6 times.

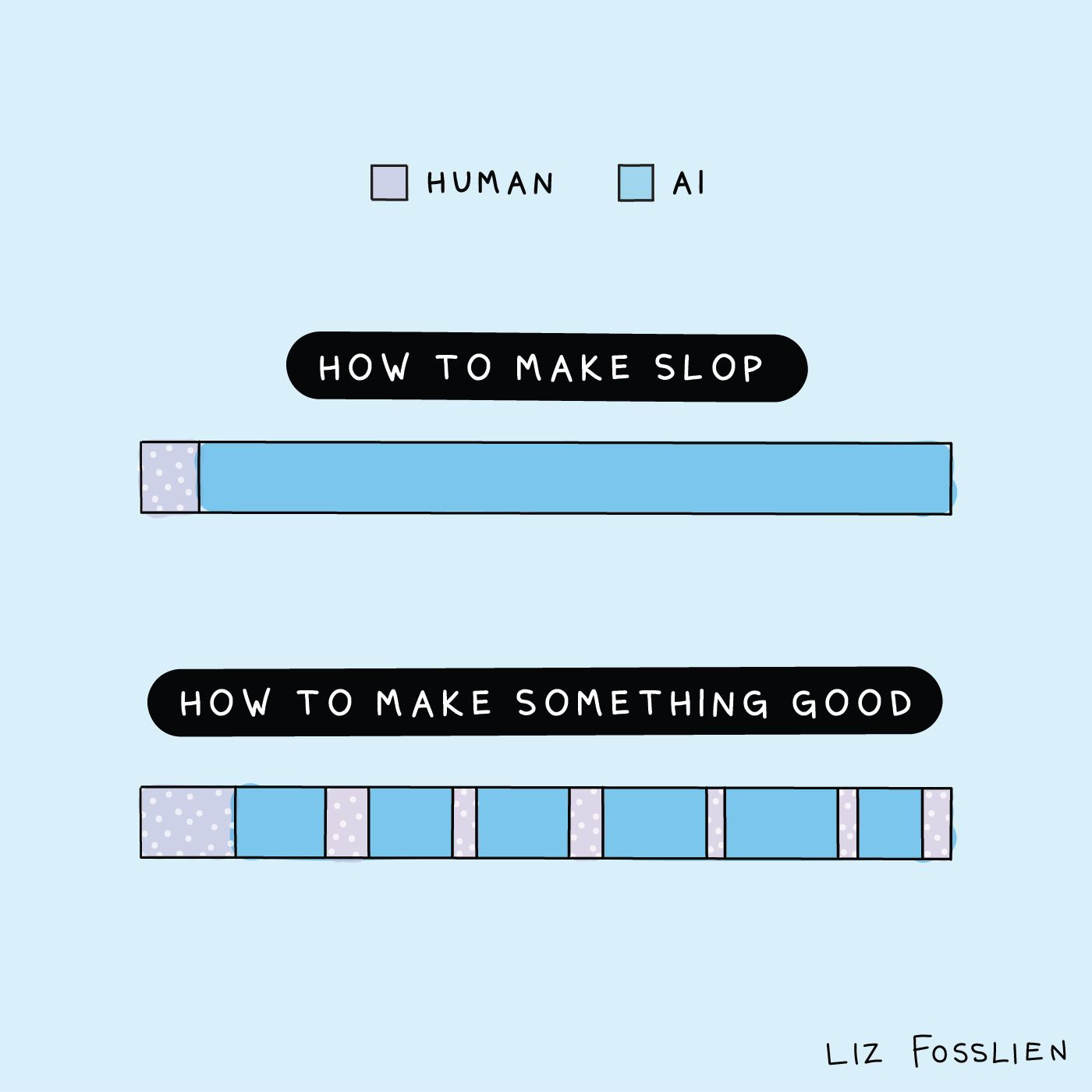

When we were done, my interaction with Claude looked like the bottom of this graphic from Liz Fosslien, just with many more “human” lines than could possibly fit on it:

My original essay and Claude’s initial critique of it bore almost no resemblance to what I used in the final essay. I needed to wade through the complexity with it. Using it probably sped me up a bit, but the more important effect was that it deepened my understanding of the problem. That’s how to make AI an actual thought partner.

3. Which tools should I use?

Every day, you can go on X or LinkedIn and find five new tools that you “must” use if you don’t want to become part of the permanent underclass.

And yet despite all this hype, the vast majority of people are getting the vast majority of value out of just a few things today:

Core chatbots: Claude, ChatGPT, Gemini

AI on top of general productivity tools: either embedded directly (e.g., Notion AI) or, increasingly, via connectors from the core chatbots

Domain-specific tools: e.g., Harvey for legal or Clay for sales

And for anyone more technical, agentic coding tools: Cursor, Claude Code, Codex

Because of the speed of progress, much of what you see beyond the most common tools is immature, unvetted, and highly likely to waste your time. As new products mature, the core use cases and workflows get worked out, those workflows get easier to use and less brittle, and a small number of products emerge as the category leaders.

If you’re trying products before any of that happens, you’re basically doing free user testing in exchange for the potential alpha of being early. For most people, that is not a good trade.

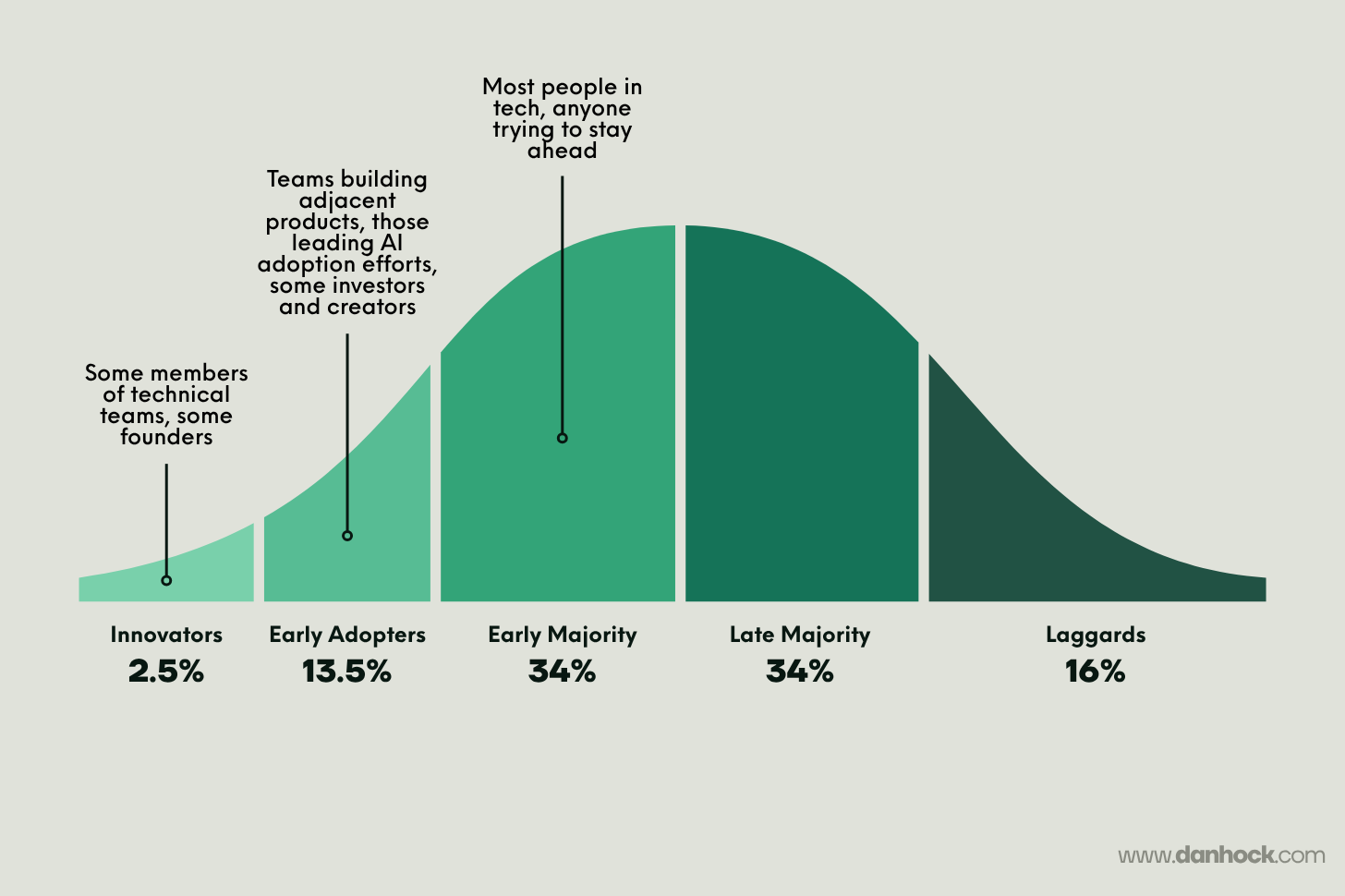

One way to reason about when you should actually adopt a new tool is the diffusion of innovations curve, originally popularized by Everett Rogers. Where you belong on this curve depends on what you’re trying to accomplish.

Innovators are typically those actively trying to apply a new technology. This group may include founders and certain members of technical teams. Importantly, most of what they are doing is just trying to learn about the new technology, not trying to use it to make themselves more effective.

Tools at this stage are often hard to use, half-formed, and present security or other risks. Despite this, they frequently go viral, causing many more people to try them than otherwise would. OpenClaw was in this position in November of last year. Today, OpenClaw (along with alternative locally run agents being built by Anthropic and others) has moved into Early Adopter territory.

Early Adopters include teams building adjacent products, those leading AI adoption efforts inside companies, and creators and investors exploring an industry. A big part of the goal here is still learning, but the tools are starting to get more useful.

Tools at this stage often look like “real products” but are still rough around the edges. Claude Cowork is in this territory now. Agentic coding tools like Claude Code or Codex were here in the second half of 2025, but for technical users they are now in the Early Majority.

The Early Majority includes most people working in tech (certainly everyone in the product organization) and anyone else who is actively trying to keep their edge. This is where the goal shifts firmly from learning to improving performance.

Tools here are gaining real adoption with consumers or businesses, are easier to use, and have established pricing models, and it’s often clear who the handful of winners in a category will be. They include things like AI meeting assistants (Granola, Otter) and research tools like NotebookLM or the deep research modes in Gemini or Claude. The paid offerings of core chatbots were in the Early Majority a year or two ago, but are now in the Late Majority.

The same person can take on several of these personas, depending on their role and how they are trying to use the technology. Casey Winters has written a good piece on how to think about this that is worth reading.

The most important thing is simply being intentional about where you sit and being willing to ignore the noise if it’s too early.

Into the future, serenely

Anxiety levels in tech are through the roof right now. It feels like things are moving at 1,000 miles per hour, and if you don’t keep up, you might do irreparable damage to your career.

One of the reasons it feels this way is that there are many people incentivized to push the fear narrative: people building or selling new products, investors in these products, and a legion of influencers trying to scare you into clicking.

It is true that AI will drive unprecedented gains for every knowledge worker in the world. But it is also being shoved on us with unprecedented speed and hype. New tech always rolls out more slowly and less completely than it seems like it will at first.

For most people, if they’re using a small set of the most common tools, engaging with them thoughtfully, and applying them to their most important problems, they’re going to be just fine.

Credits

Thank you to Casey and Gabriela for their feedback on this essay.
